2026 AI Predictions

Another year in the books. Actually a quarter of the whole damn century is over if you can believe it. A lot has happened. Ghibli mania…remember that? Sora 2 was very fun for like three days. AI hit gold-medal level at the International Math Olympiad; most people didn't expect that. Google caught up. People are horny for Claude Code with Opus 4.5 currently. One of my predictions last year was full stack app creation. For a while it seemed like I was a bit overzealous, but a recent experience suggests we are basically there. Not in one shot, and not with complex architecture. But during a recent project, as soon as I gave it enough context with various command line interfaces (CLIs), Codex was able to work through a set of problems and construct all components of a basic iOS app. That’s not nothing.

Coding and math have progressed a lot this year. Writing has been stagnant though. Image and video models have become very good, now capable of coherent edits, text and basic graphic design, and character consistency.

An underrated dimension of coding models is their general agentic abilities. They can do a lot of stuff! Computer use struggles for very specific reasons. It’s more like a robotics task, demanding visual-spatial understanding to navigate GUIs. Models can do great and complex tasks with the command line but still have trouble seeing and clicking. An important distinction.

In my view, 2025 was mostly about setting up the infrastructure for mass market use of frontier AI. It was also a year where the industry became truly competitive. OpenAI is now in a tight race with Google and Anthropic, with xAI and Meta in hot pursuit. Google in particular has gained significant ground.

So, what will 2026 bring? I think the whole community following AI gets more grounded by the year. The way people speculated about OpenAI's secret Q* model (later known as Strawberry) back in late 2023 was a bit unhinged frankly. People didn't have a good sense of what was possible. Now I think a lot of us have our heads wrapped around the state of play, and perhaps where things are going.

The Compute Lump

Physical infrastructure is inherently lumpy. You don't get smooth increments of new GPUs. Capacity comes in batches. The massive Blackwell clusters that have been building out through 2025 will come online for real training runs in 2026. This suggests we might get a surge in capability, not a gradual slope. The labs have been somewhat compute-constrained this year while focusing on productization and serving a fast-growing user base. Perhaps next year a somewhat larger share of compute will go to advancing capability. It’s hard to say for sure, though, since the user base is not mature yet.

The Reasoning Paradigm Matures

One of the common critiques of current AI is sample efficiency. Models need vastly more data than humans to learn something. A child sees a few cats and understands the concept; a model needs millions of images. This is true of the old paradigm: predict the next token, scale up data and parameters. But reasoning models are fundamentally different.

Lukasz Kaiser is one of the authors of the original Transformer paper and now a lead researcher on reasoning at OpenAI. In a recent interview, he pointed out that "reasoning models learn from an order of magnitude less data." They're trained with reinforcement learning, they think for variable amounts of time, they call tools, verify their own work, and backtrack when they make mistakes. The learning process has already changed.

We're earlier in this paradigm than most people realize. Kaiser started working on reasoning models two years before o1 shipped. The paradigm is "only starting," he says, and "on a very steep path up."

Most of the gains we've seen recently have come from Reinforcement Learning with Verifiable Rewards (RLVR), or reinforcement learning on domains where you can verify answers. Coding and math have clear right/wrong signals, so you can run RL loops and the models get meaningfully better. More compute means more RLVR runs, on more domains, with more iterations.
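To make "verifiable" concrete, here is a minimal sketch of what a right/wrong reward signal could look like for math and for code. This is only an illustration, not how any lab actually does it: the function names `math_reward` and `code_reward` and the test-case format are hypothetical, and a real pipeline would sandbox untrusted code rather than call `exec` directly.

```python
from fractions import Fraction


def math_reward(model_answer: str, reference: str) -> float:
    """Hypothetical math checker: 1.0 if the two answers are the same number, else 0.0."""
    try:
        same = Fraction(model_answer.strip()) == Fraction(reference.strip())
    except (ValueError, ZeroDivisionError):
        # Unparseable answers simply earn no reward.
        return 0.0
    return 1.0 if same else 0.0


def code_reward(candidate_source: str, func_name: str, cases) -> float:
    """Hypothetical code checker: fraction of (args, expected) cases the candidate passes.

    Assumption: candidate_source is trusted enough to exec here. A real
    setup would run it in an isolated sandbox with time limits.
    """
    namespace = {}
    try:
        exec(candidate_source, namespace)
        func = namespace[func_name]
        passed = sum(1 for args, expected in cases if func(*args) == expected)
    except Exception:
        # Any crash (syntax error, missing function, runtime error) scores zero.
        return 0.0
    return passed / len(cases)


if __name__ == "__main__":
    print(math_reward("0.5", "1/2"))
    print(code_reward("def add(a, b): return a + b", "add", [((1, 2), 3), ((2, 2), 4)]))
```

The point is that the score comes from a mechanical check rather than a human judgment, which is why these domains are cheap to run RL loops on and why writing, with no such checker, is harder.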

Cheaper and more abundant inference changes the calculus somewhat. Right now people are selective about when to use reasoning models because of cost and latency. If that constraint loosens, you can throw extended thinking at more problems: every agent step, every verification, every subtask. That's a multiplier on top of raw capability gains.

Kaiser thinks reasoning models are "probably capable of doing most" computer-based work tasks already. They're just "quirky for now," missing training data in places, not yet polished. But with competition pushing all the labs to fill those gaps, and research improvements still in the pipeline, he expects "a very sharp improvement in the next year or two."

Software Engineering

I think this is going to be a special year for software engineering.

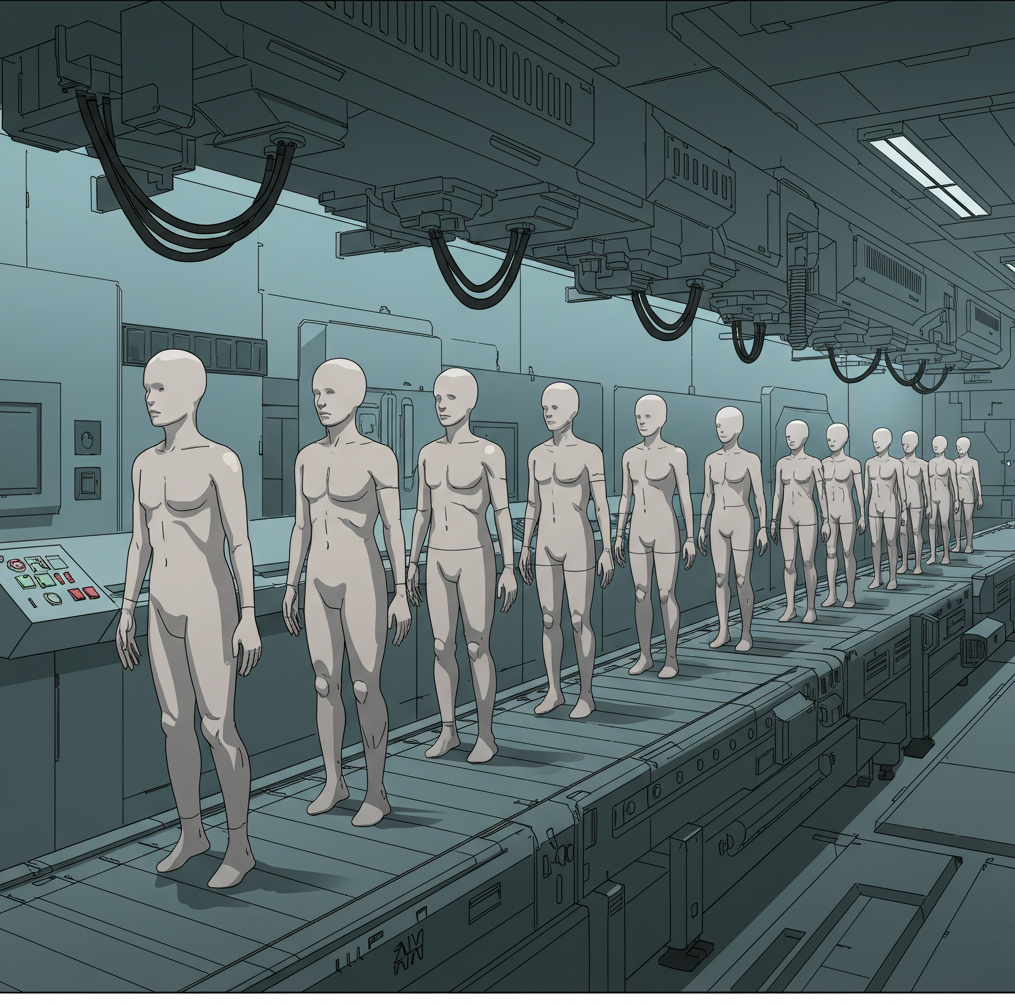

I think we’ll be firmly beyond the median human software engineer by the end of 2026. This sounds aggressive, but consider what a median software engineer actually does. Most of the job is routine: CRUD apps and bug fixing. The genuinely hard architectural work is a small fraction of total engineering hours. And engineers tend to work slowly and know only a few libraries.

The tools are already extremely fast compared to a (meatbag) human and know more languages and frameworks than any human. The remaining gap is mostly in context management and reasoning ability.

But next year we will have much bigger models that can use a lot more inference to think. They will have undergone better RL in coding environments and there will likely be a patchwork of approaches to address ongoing memory needs. I think these systems will be extraordinary at coding by the end of the year.

Multimodal Integration

I expect 2026 to bring more integrated, truly multimodal models. More unified systems that reason across text, image, video, voice, and code. Technique advances plus the compute lump should enable this. Google's Nano Banana Pro points in this direction.

Image Generation

More polish, diminishing returns. The big improvement will likely be speed and cost.

Video Generation

Video generation still has a lot of room to grow. We might totally be out of the slop zone by year end. Clips under 30 seconds with solid direction-following and good coherence. And importantly, cheap enough to provide frontier access to entry-level users on $20/mo plans.

Voice

I think this is still an underrated area of capability. We are still early in using synthetic voices for products and interfaces, and I expect that to blossom in 2026. The best models will be indistinguishable from a human, even in inflection.

Computer Use

This is a big one. I think Dwarkesh is wrong and computer use improves significantly. I think the end-of-year big chungus models will take a leap in visual intelligence, which will be key for navigating complex software. Combine that with kludgey improvements in tracking and expanding context. This type of GUI navigation will be like a skeleton key for all the incredibly powerful software out there. Will a computer use agent be able to do basic video editing tasks by the end of 2026?

The Economy

I expect a surge in startups and apps building on these tools. The infrastructure is in place, the APIs are good, the costs are coming down.

Individuals will have insane leverage. A coding agent that builds apps, voice AI that handles calls, video generation for content, AI that navigates software on their behalf. A solo operator or small team can do what used to require a much larger organization. This works especially well for pure software products: social networks, platforms, SaaS tools, video editors.

On jobs more broadly: 2026 is probably another trust-building year. Business adoption has been slow and I imagine it will continue to be. Maybe a modest uptick in unemployment.

AI Risk

I'm bearish on short-term rogue AI risk. High-profile companies don't release rogue AI. Their incentives run completely against that outcome. OpenAI, Google, Anthropic: they're terrified of releasing something that goes off the rails. The reputational damage, the liability, the regulatory hammer.

Markets will thankfully help guide safety. The same pressure that's crushing the capability problem is also crushing the safety problem, because unsafe AI is bad for business.

Summary

2025 felt like loading the cannon. 2026, maybe some shots across the bow.

I’ve enjoyed writing regularly this year. Thank you for reading and happy new year!